How an erroneous ban led me to discover a thriving ecosystem of better (and cheaper) alternatives

About two months ago, I woke up to find myself completely locked out of all Anthropic services on my personal account. No warning and no specific explanation, just a generic ban notification and a refund of my subscription. I had been using Claude regularly for about a year, and as a security professional I used it for legitimate research and development work. Apparently, that was enough to trigger Anthropic’s heavy-handed moderation system, although I’m not sure why it happened on that particular date, since I’d been doing the same things the entire time I was subscribed.

Welcome to appeals hell.

The Ban

I work in security. I use AI tools to help with threat modeling, code review, security documentation, and general research. I sometimes use them to assist with bug bounty work or a particularly tricky part of a Hack the Box machine. Sometimes I even use the letters h, a, c, and k in the same sentence. Nothing illegal, nothing even against the Anthropic terms of service. It’s just the day-to-day side projects, training, and learning of someone trying to keep systems secure, and, I might add, the same kind of work the Anthropic security team does. But somewhere in Anthropic’s automated moderation pipeline, my legitimate security work got flagged as a violation.

The ban was comprehensive. Claude.ai? Gone. Anthropic API access? Terminated. Claude Desktop? Logged out and non-functional. They even banned my phone number so I couldn’t sign up for a new account. Every service I had been paying for and every workflow I had built around these tools became inaccessible in an instant. I guess at least they were kind enough to refund my subscription (though not my API funds).

The Appeals Process (aka the Black Hole)

I filed an appeal. That was almost two months ago.

The response? Crickets, mostly. The only thing I’ve gotten at all was an automated message telling me I’d been banned for “violations of our terms of service” and that they were refunding my subscription amount.

Here’s the kicker: a few weeks into my appeals saga, Anthropic quietly launched an appeals form for security professionals. I’m clearly not the only security professional caught in this dragnet. When you need to create a dedicated appeals process for an entire professional category, maybe—just maybe—your moderation system is overcorrecting.

I get it. Anthropic is trying to prevent misuse of their platform. Security tools can be dual-use, and I understand the need for caution. They’re tired of “Claude Code hacks website” headlines. But when you’re banning legitimate professionals doing legitimate work, you’re not just losing customers—you’re creating a motivation engine for people to find and promote alternatives.

And find them I did.

The Silver Lining: You Need Anthropic Less Than You Think

Getting banned forced me to explore the broader AI ecosystem. What I discovered was eye-opening: not only are there viable alternatives to every Anthropic service I was using, but many of them are significantly better in terms of cost, flexibility, and—critically—less arbitrary moderation.

OpenCode: The Claude Code Replacement

Claude Code was quickly becoming my go-to for agentic coding tasks. After the ban, I discovered OpenCode, and honestly, I’m never going back, at least for personal projects.

OpenCode is essentially Claude Code but with a crucial difference: you bring your own API keys. This means you can use any model from any provider, not just Claude. More importantly, you’re not subject to Anthropic’s consumer-facing moderation system.

The real game-changer is pairing OpenCode with OpenCode Zen, which gives you access to cheaper, high-performing models like MiniMax and Kimi. Tasks that would burn through $10+ of Claude tokens cost me around $0.10 with these alternatives. That’s not a typo: roughly 1/100th the price for nearly identical, or very close, results. And to be honest, the gap is wide enough that even if the cheaper model spends twice, three times, or even ten times as long working on a problem (and burns proportionally more tokens), you’re still saving money by an order of magnitude.
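To put rough numbers on that claim, here is a minimal sketch in Python. The $10 and $0.10 figures are the ones quoted above; the function name, the use of whole cents, and the assumption that cost scales linearly with how much extra work the cheaper model does are illustrative simplifications, not measured data.

```python
# Rough cost comparison using the per-task figures quoted in the post.
# Amounts are in cents to avoid floating-point noise.
BASELINE_COST_CENTS = 1000  # ~$10 per task on the expensive model
CHEAP_COST_CENTS = 10       # ~$0.10 per task on the cheaper model


def savings_factor(usage_multiplier: float) -> float:
    """How many times cheaper the alternative is, assuming its cost
    grows linearly with usage_multiplier (a simplifying assumption)."""
    return BASELINE_COST_CENTS / (CHEAP_COST_CENTS * usage_multiplier)


# Multiplier at which the cheaper model would cost the same as the baseline.
BREAK_EVEN_MULTIPLIER = BASELINE_COST_CENTS / CHEAP_COST_CENTS

if __name__ == "__main__":
    for multiplier in (1, 2, 3, 10):
        print(f"{multiplier}x the work: {savings_factor(multiplier):.1f}x cheaper")
    print(f"break-even at {BREAK_EVEN_MULTIPLIER:.0f}x the work")
```

Under these assumptions, the cheaper model would have to do about a hundred times as much work before the costs even out, so a tenfold slowdown still leaves a tenfold saving.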

Yes, you can also integrate OpenCode with OpenRouter for access to Claude models (more on that below), but I’ve found using Zen with MiniMax or Kimi is more cost-effective for most OpenCode tasks.

OpenRouter: API Access Without Big Brother or the Drama

For direct API access, OpenRouter has become my new standard. It’s an API aggregator that provides access to dozens of models from multiple providers—including all the Claude models.

That’s right: even if you’re banned from Anthropic’s direct API, you can still access Claude Haiku, Claude Sonnet, and Claude Opus through OpenRouter. It’s like being banned from the Apple Store but realizing Best Buy is selling the same products.
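For a sense of what calling a model through an aggregator looks like, here is a minimal sketch in Python using only the standard library. It assumes OpenRouter's OpenAI-style chat completions endpoint and an API key in an `OPENROUTER_API_KEY` environment variable; the model ID and the helper names `build_request` and `ask` are placeholders chosen for illustration, so check the provider's current documentation before relying on any of them.

```python
import json
import os
import urllib.request

# Assumed endpoint: OpenRouter's OpenAI-compatible chat completions URL.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(prompt, model="anthropic/claude-3.5-sonnet", api_key=None):
    """Build (but do not send) a chat completion request.

    The default model ID is a placeholder; swap in any model the
    aggregator currently lists.
    """
    api_key = api_key or os.environ.get("OPENROUTER_API_KEY", "")
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt, **kwargs):
    """Send the request and return the first reply's text."""
    with urllib.request.urlopen(build_request(prompt, **kwargs)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Switching models in this sketch is a one-string change to the `model` argument, which is the main practical appeal of going through a single aggregator API.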

Beyond Claude, OpenRouter gives you access to a slew of other models if you desire. One API, multiple providers, competitive pricing comparable to buying direct from the model providers, and no arbitrary bans because you’re a security professional and Anthropic’s automated ban system was vibing off that day. If you really love Claude Code, you can even use it with OpenRouter.

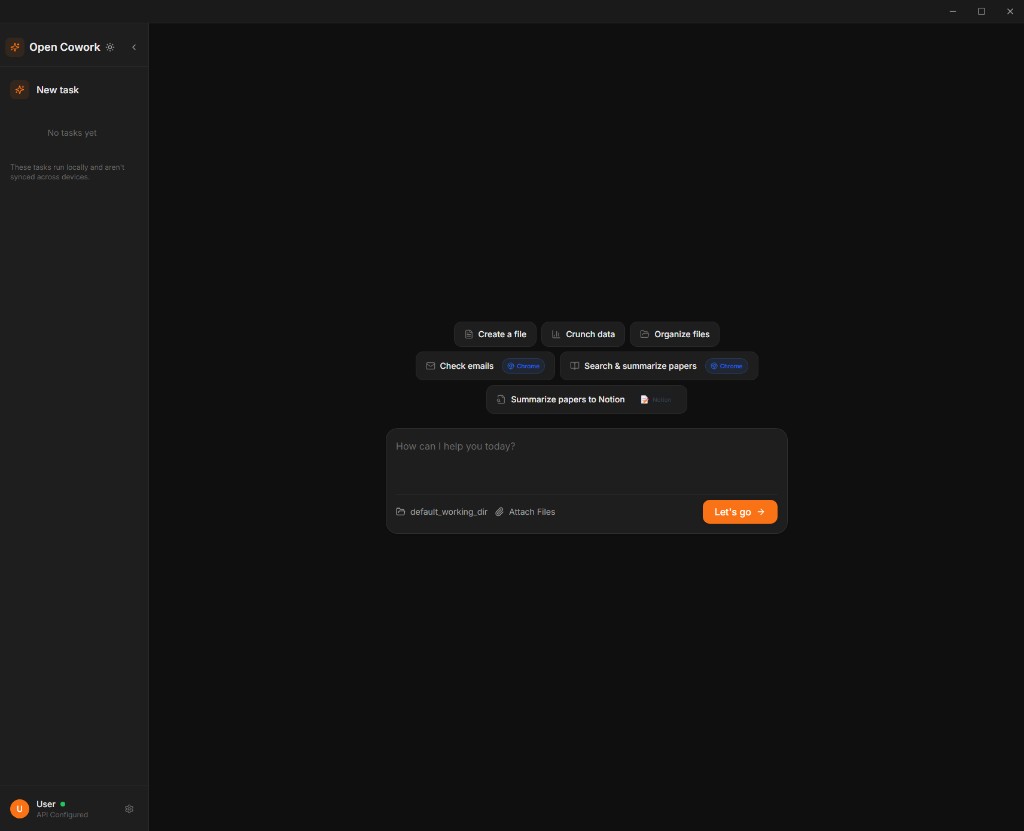

Open Cowork: The Claude Desktop Alternative

Claude Desktop and its newer Claude Cowork mode were incredibly useful for general tasks, document work, brainstorming, and quick queries. After the ban, I found Open Cowork, an open-source alternative that replicates the functionality.

Open Cowork is basically Claude Desktop/Cowork but decoupled from Anthropic’s ecosystem. You plug in your own API keys, whether from OpenRouter, Anthropic (if, you know, you’re one of the people who still has access), or other providers, and you’re off to the races. The interface is familiar, the functionality is comparable, and crucially, there’s no separate moderation layer that might ban you for asking security-related questions.

It integrates particularly well with OpenRouter, so you can access any model you want through a clean desktop interface without worrying about your account getting nuked from orbit because you breathed suspiciously.

Lessons Learned

Getting banned was frustrating. The appeals process is terrible. But forcing myself to explore alternatives revealed an important truth: vendor lock-in is a choice, not a necessity.

I had built my workflows around Anthropic’s ecosystem because it was convenient and—at the time—seemingly best-in-class. But convenience came with hidden costs: opaque, inconsistent, seemingly vibes-based moderation, no due process, and pricing that I now realize was inflated compared to alternatives.

For Other Security Professionals

If you work in security and use AI tools in your personal learning and research, you’re probably at risk of the same fate. Luckily Anthropic doesn’t seem to mind so much if you’re on an enterprise account with your company, probably because:

Conclusion

I’m still in appeals hell. Maybe Anthropic will eventually realize their mistake and reinstate my account. Maybe they won’t. At this point, I’m not sure I care that much.

The ban forced me to discover an ecosystem of tools that are more flexible, more affordable, and more respectful of legitimate security work. I’m spending less money, getting comparable or better results, and not constantly worried that my next query will trigger some opaque moderation flag.

So in a weird way, thanks for the ban, Anthropic. You did me a favor.